The app stores are flooded with colorful math games that promise to make learning “fun.” They use flashing lights, digital stickers, and catchy music to keep kids clicking. But there is a quiet crisis happening in African education: children are spending hours “playing” these apps without actually improving their math skills.

The truth is, most learning apps fail because they confuse keeping a child busy with building a child’s brain.

1. The Trap of “Empty” Gamification

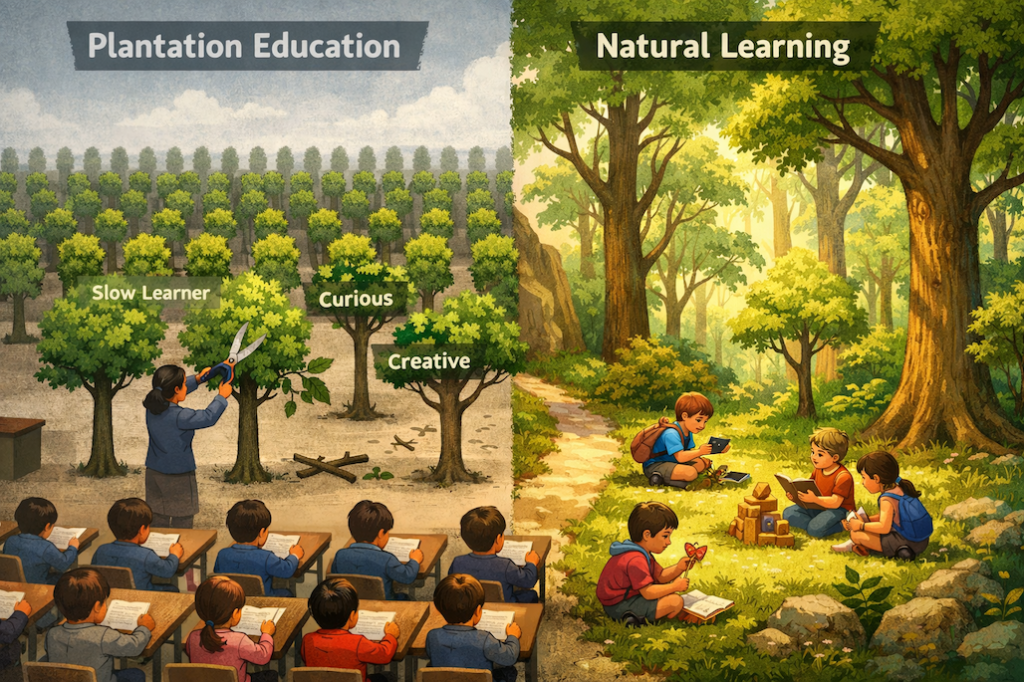

Gamification—using game-like elements in non-game contexts—can be a powerful tool. However, when it stands alone, it creates a “sugar high.” A child might earn a virtual trophy for answering ten easy questions, but if those questions don’t push their boundaries, no real learning has occurred.

If an app focuses more on the reward than the competency-based challenge, it’s a toy, not a teacher. High-impact systems know that the real “fun” in learning comes from the dopamine hit of finally mastering a difficult concept, not just collecting digital coins.

2. The Missing Layer: Measurable Skill Progression

What separates a “game” from a precision learning system is the data. Most apps fail to provide a clear, measurable path of progress.

- The Problem: Parents see their child “on the app” and assume they are learning.

- The Reality: Without learning analytics, you have no idea if they are stuck on the same level or if they are truly building a foundation for future STEM success.

- The Solution: An effective system must track micro-skills—breaking down complex math into small, measurable steps that show exactly where a child stands.

3. The Trifecta of Effective Learning

To move the needle on math skills in Africa, a digital system needs three things that go far beyond basic engagement:

- Instant Feedback: Correcting errors the moment they happen so wrong logic doesn’t take root.

- Structure: A logical flow that follows a competency-based curriculum, ensuring no gaps are left behind.

- Adaptation: The system must be “smart” enough to get harder when the student is bored and easier when they are frustrated. This is the heart of adaptive learning.

Beyond the “Play” Button

We need to stop asking if an app is “fun” and start asking if it is effective. High-impact learning isn’t about how many levels a child finishes; it’s about how much deeper their understanding is today than it was yesterday.

Choose Impact Over Entertainment

Boldungu was built to solve the “engagement trap.” To provide a high-impact, precision learning system that prioritizes real math mastery through data, feedback, and structured growth.

- Move beyond games: Visit boldungu.com to see our high-impact approach.

- Start measuring mastery: Download Boldungu from the Google Play Store.

![]()